Modeling fish stocks: a complex scientific quest

For decades, fish stock modeling has kept researchers busy at Ifremer, at the International Council for the Exploration of the Sea (ICES), the body whose recommendations are used to set fishing quotas in the European Union, and at the FAO, the Food and Agriculture Organization of the United Nations. Whether they work on powerful computers or aboard oceanographic vessels, they all face the same question: how do you count the fish in the ocean?

Fish population models and their many limitations

Yet fish stock assessment has not revealed all its secrets. An article in Libération recounts a survey campaign aboard the "Thalassa," Ifremer's oceanographic vessel, to assess stocks of anchovy (and of other pelagic fish, which live in open water rather than near the seabed, such as horse mackerel, sardines and mackerel) in the Bay of Biscay. It describes how hard fisheries scientists find it to predict how the anchovy stock will evolve: "the upwelling index (the strength of the cold-water upwelling along the Landes coast) seemed to make it possible to predict anchovy abundance from this single parameter. But the scientists hit a wall, leaving the fishermen gnashing their teeth." In the first year, for example, the index produced a very pessimistic forecast and the scientists recommended a very low quota (Total Allowable Catch, TAC), even though anchovies turned out to be plentiful that year.

The scientists seem to have tried every kind of model: generalized production models, virtual population analysis, the Schaefer model, Bayesian models, general equilibrium models, and more. Yet stocks still keep much of their mystery, as the widespread overexploitation of fisheries in Europe, Africa and around the world reminds us. One thing is certain: "[it is] only after you have eaten all the fish, [that] you know how many there were."[1]
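To give a sense of what the simplest of these models involves, here is a minimal sketch of the Schaefer surplus production model, in which biomass grows logistically and each year's catch is subtracted: B(t+1) = B(t) + r·B(t)·(1 − B(t)/K) − C(t). The growth rate r, carrying capacity K, starting biomass and catch series below are hypothetical values chosen for illustration, not estimates for anchovy or any real stock. Real assessments have to fit r and K to catch and survey data, which is precisely where the uncertainty creeps in.

```python
from typing import List


def schaefer_projection(b0: float, r: float, k: float, catches: List[float]) -> List[float]:
    """Project biomass with the discrete Schaefer model:
    B[t+1] = B[t] + r * B[t] * (1 - B[t] / K) - C[t].
    Biomass is floored at zero so catches cannot drive it negative.
    """
    biomass = [b0]
    for catch in catches:
        b = biomass[-1]
        surplus = r * b * (1.0 - b / k)  # logistic surplus production
        biomass.append(max(b + surplus - catch, 0.0))
    return biomass


def msy(r: float, k: float) -> float:
    """Maximum sustainable yield of the Schaefer model, reached at B = K/2."""
    return r * k / 4.0


if __name__ == "__main__":
    # Hypothetical parameters (tonnes), for illustration only.
    r, k = 0.5, 100_000.0
    print(f"MSY = {msy(r, k):,.0f} t")
    # Catching exactly MSY from B = K/2 keeps the stock stable.
    print(schaefer_projection(k / 2, r, k, [msy(r, k)] * 3))
    # Catching 20% more than MSY from the same start depletes it year after year.
    print(schaefer_projection(k / 2, r, k, [1.2 * msy(r, k)] * 3))
```

Even this two-parameter model shows why small errors matter: a catch that is sustainable at one value of r becomes depleting at a slightly lower one.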

Using field knowledge

This observation leads to another: a rough "wet finger" estimate (a guess made by feel, like testing the wind with a licked finger) from someone who knows the subject well is sometimes far better than a poor model. Aboard the "Thalassa," fisheries scientist Jacques Massé watches the sonar closely and shares his first impressions: blue patches, red dots, clouds of points; his diagnosis is quick. "Not clairvoyant, just experienced." Couldn't experience, then, usefully stand in for an incomplete or ill-suited model?

Estimating rather than modeling: a methodological choice

This question comes up again and again at Vertigo Lab in our work on the economic valuation of ecosystem services. The available data are rarely sufficient to build robust models without large-scale research projects, and such projects are incompatible with the urgent need for results. What should we do then? Take a value and a complete model from another region and apply them to our case without a second thought? Run a stripped-down model with large uncertainties and expose ourselves to the criticism that is bound to follow?

As part of a project assessing the financing needs of the network of marine protected areas (MPAs) in the Mediterranean, Vertigo Lab estimated the costs of creating MPAs using a sample drawn from this network. The easy option would have been to use the existing model linking creation costs to the area to be protected. That approach did not seem sound to us, and our field survey largely confirmed our doubts: the costs predicted by the model were sometimes ten times those reported in a questionnaire sent to managers who remembered their MPA's creation phase.

The "collective wet finger" and local knowledge

Our view is that "expert opinion" estimates are sometimes more accurate than a model and, in any case, provide plenty of relevant information, as long as a few principles are respected. First and foremost, always calibrate the precision you expect from an estimate to the intended use of the results, whether that is building an argument, a technical use such as sizing compensation measures, or informing and raising awareness: it is the intended use that shapes the model!

This also makes research more efficient, doing as much (if not more) with less.

Don't hesitate to use the "collective wet finger" technique as well, described in a Le Monde article on sea level rise[2]: two researchers applied it to estimate how much ocean levels will rise by 2100. And it works!
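The way individual guesses were combined in that exercise is not described here, so what follows is only a minimal sketch of one simple way to pool a "collective wet finger": each expert gives a low, best and high estimate, and the group estimate takes the median of each across the panel. The names `ExpertGuess` and `pool_guesses`, the three-point format and the figures (in arbitrary units) are all hypothetical, for illustration only. Equal weighting is the simplest choice; more elaborate protocols weight each expert by how well they perform on calibration questions.

```python
from statistics import median
from typing import List, NamedTuple


class ExpertGuess(NamedTuple):
    """One expert's judgement: a plausible range plus a best guess (hypothetical format)."""
    low: float
    best: float
    high: float


def pool_guesses(guesses: List[ExpertGuess]) -> ExpertGuess:
    """Equal-weight pooling: take the median of each bound across experts.

    The median is robust to a single wildly optimistic or pessimistic
    expert, which is the point of asking a group rather than one person.
    """
    if not guesses:
        raise ValueError("need at least one expert guess")
    return ExpertGuess(
        low=median(g.low for g in guesses),
        best=median(g.best for g in guesses),
        high=median(g.high for g in guesses),
    )


if __name__ == "__main__":
    # Hypothetical panel of four experts, one of them an outlier.
    panel = [
        ExpertGuess(20, 40, 80),
        ExpertGuess(30, 50, 90),
        ExpertGuess(25, 45, 70),
        ExpertGuess(10, 150, 300),  # the outlier barely moves the median
    ]
    print(pool_guesses(panel))  # ExpertGuess(low=22.5, best=47.5, high=85.0)
```

A mean would have been pulled up sharply by the fourth expert's best guess of 150; the median keeps the group estimate close to where most of the panel sits.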

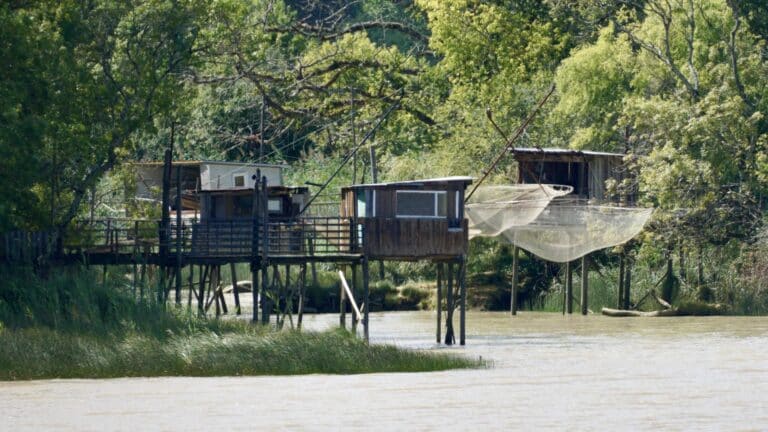

Local people's knowledge and experience are a real asset if you know where to look: to decide what to have for lunch, it is sometimes (much) safer to ask at the harbor bar about last night's catch than to run a complex predictive model... Science should not underestimate the power of "traditional knowledge"; it should bring this knowledge into the scientific process and cross-check its results against such alternative sources whenever possible.

Participatory biology centers and do-it-yourself biology, with their community labs far from university campuses that shake up a sometimes outdated research establishment, are already showing us the way...

Towards simpler, more relevant research?

Ultimately, the question today concerns the relevance of "low-tech" research: research that takes the time to ask the right questions rather than imposing a complex model at all costs, and that integrates traditional knowledge, grounded in common sense and cross-checked sources.

References

- [1] Ransom Myers, quoted in Robert Kunzig, The Ocean, Actes Sud (cited in Libération, "The sea, how many fish?").

- [2] "Sea level rise: a 'wet finger' estimate," Le Monde, 8 January 2013.